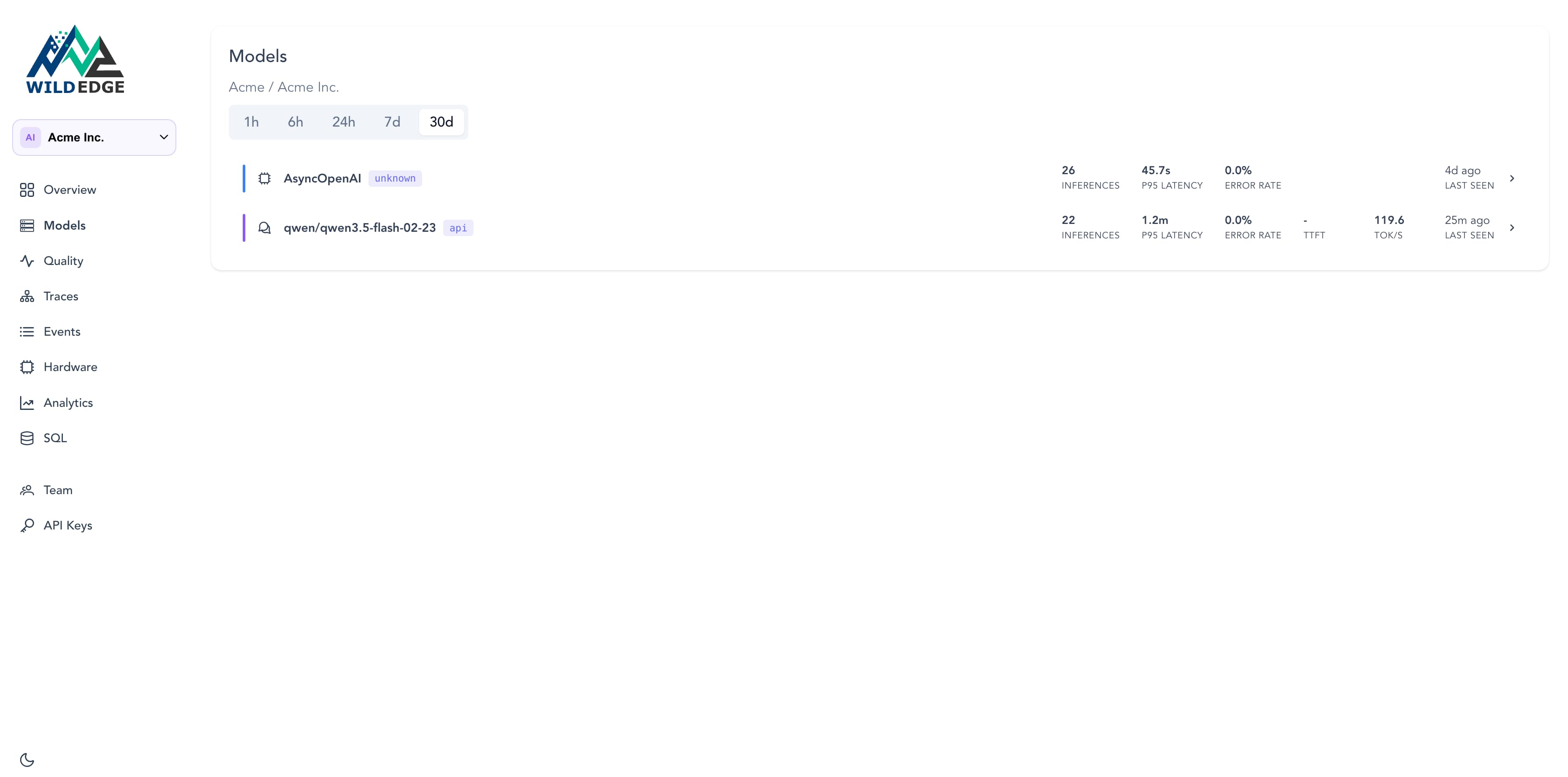

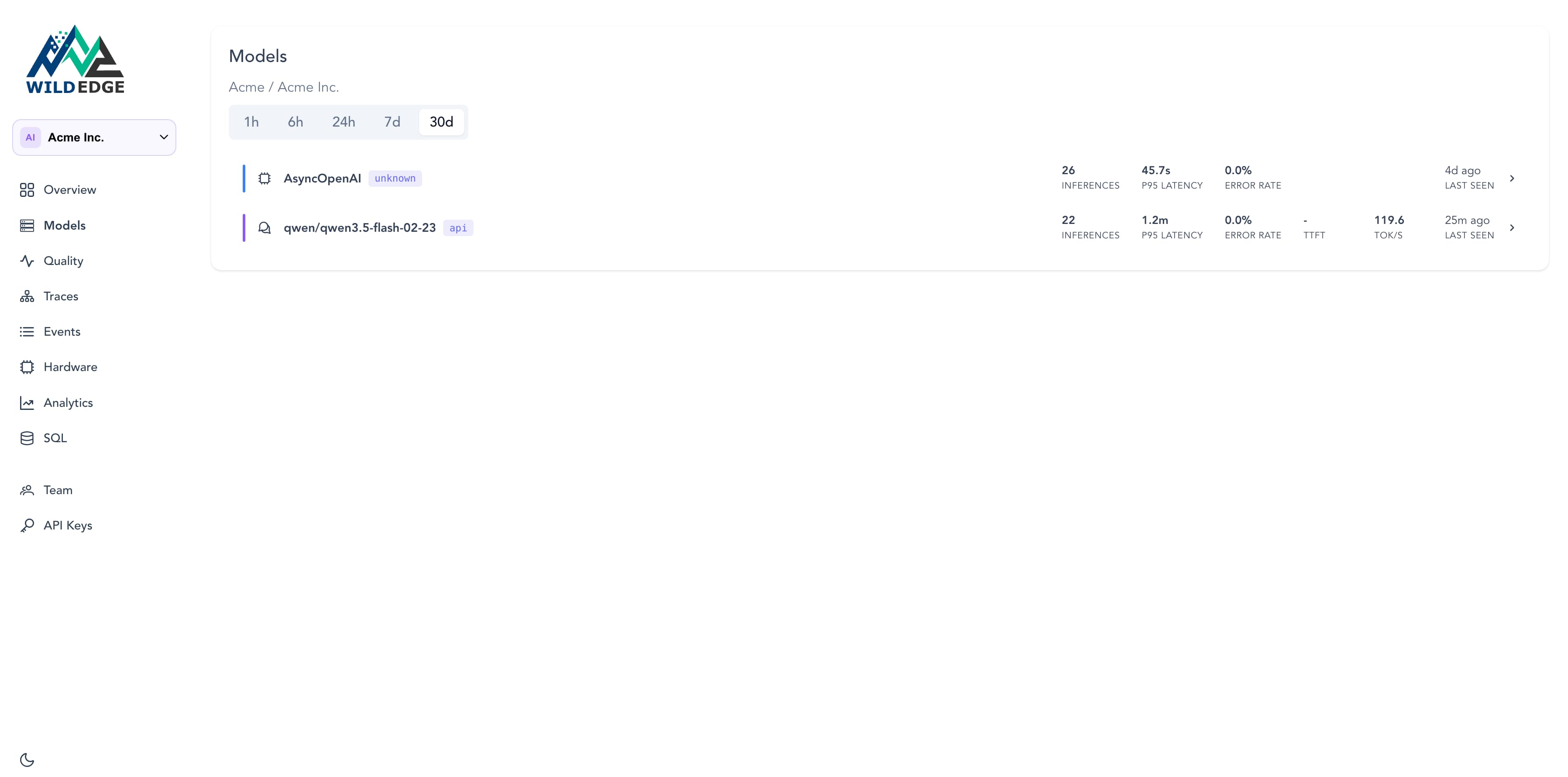

Custom, self-hosted, and remote API models side by side. Sorted by performance, filterable by type.

Measure and improve how your AI performs in production, across edge devices, self-hosted runtimes, and remote APIs.

95% of AI pilots fail to reach production. Models that can't be observed can't improve.

No credit card required · Up and running in minutes

$ wildedge run -- python app.py

Python today. More runtimes on the way.

Wild Edge shows you exactly where and when your model starts drifting.

Monitor token throughput, TTFT, KV cache usage, and behavioral drift.

Latency, token cost, error rate, and behavioral drift across every provider.

Traces tie the whole sequence together: timing, tokens, and error state per step, across every model call regardless of where it runs.

Start free. Upgrade when you need more.

Do you know how your models are behaving? Set up Wild Edge in 5 minutes and find out. Dev, staging, or production.

No credit card · Works across Python, on-device runtimes, and remote APIs